IEEE Account

- Change Username/Password

- Update Address

Purchase Details

- Payment Options

- Order History

- View Purchased Documents

Profile Information

- Communications Preferences

- Profession and Education

- Technical Interests

- US & Canada: +1 800 678 4333

- Worldwide: +1 732 981 0060

- Contact & Support

- About IEEE Xplore

- Accessibility

- Terms of Use

- Nondiscrimination Policy

- Privacy & Opting Out of Cookies

A not-for-profit organization, IEEE is the world's largest technical professional organization dedicated to advancing technology for the benefit of humanity. © Copyright 2024 IEEE - All rights reserved. Use of this web site signifies your agreement to the terms and conditions.

Data Science

Featured article, related topics, top conferences on data science, top videos on data science.

Xplore Articles related to Data Science

Periodicals related to data science, e-books related to data science, courses related to data science, top organizations on data science, most published xplore authors for data science.

Effect of Data Characteristics Inconsistency on Medium and Long-Term Runoff Forecasting by Machine Learning

Share this page:

In the application of medium and long-term runoff forecasting, machine learning has some problems, such as high learning cost, limited computing cost, and difficulty in satisfying statistical data assumptions in some regions, leading to difficulty in popularization in the hydrology industry. In the case of a few data, it is one of the ways to solve the problem to analyze the data characteristics consistency. This paper analyzes the statistical hypothesis of machine learning and runoff data characteristics such as periodicity and mutation. Aiming at the effect of data characteristics inconsistency on three representative machine learning models (multiple linear regression, random forest, back propagation neural network), a simple correction/improvement method suitable for engineering was proposed. The model results were verified in the Danjiangkou area, China. The results show that the errors of the three models have the same distribution as the periodic characteristics of the runoff periods, and the correction/improvement based on periodicity and mutation characteristics can improve the forecasting accuracy of the three models. The back propagation neural network model is most sensitive to the data characteristics consistency.

View this article on IEEE Xplore

Efficiency Optimization Design That Considers Control of Interior Permanent Magnet Synchronous Motors Based on Machine Learning for Automotive Application

Interior permanent magnet synchronous motors have become widely used as traction motors in environmentally friendly vehicles. Interior permanent magnet synchronous motors have a high degree of design freedom and time-consuming finite element analysis is required for their characteristics analysis, which results in a long design period. Here, we propose a method for fast efficiency maximization design that uses a machine-learning-based surrogate model. The surrogate model predicts motor parameters and iron loss with the same accuracy as that of finite element analysis but in a much shorter time. Furthermore, using the current and speed conditions in addition to geometry information as input to the surrogate model enables design optimization that considers motor control. The proposed method completed multi-objective multi-constraint optimization for multi-dimensional geometric parameters, which is prohibitively time-consuming using finite element analysis, in a few hours. The proposed shapes reduced losses under a vehicle test cycle compared with the initial shape. The proposed method was applied to motors with three rotor topologies to verify its generality.

Published in the IEEE Vehicular Technology Society Section

An Intelligent IoT Sensing System for Rail Vehicle Running States Based on TinyML

Real-time identification of the running state is one of the key technologies for a smart rail vehicle. However, it is a challenge to accurately real-time sense the complex running states of the rail vehicle on an Internet-of-Things (IoT) edge device. Traditional systems usually upload a large amount of real-time data from the vehicle to the cloud for identification, which is laborious and inefficient. In this paper, an intelligent identification method for rail vehicle running state is proposed based on Tiny Machine Learning (TinyML) technology, and an IoT system is developed with small size and low energy consumption. The system uses a Micro-Electro-Mechanical System (MEMS) sensor to collect acceleration data for machine learning training. A neural network model for recognizing the running state of rail vehicles is built and trained by defining a machine learning running state classification model. The trained recognition model is deployed to the IoT edge device at the vehicle side, and an offset time window method is utilized for real-time state sensing. In addition, the sensing results are uploaded to the IoT server for visualization. The experiments on the subway vehicle showed that the system could identify six complex running states in real-time with over 99% accuracy using only one IoT microcontroller. The model with three axes converges faster than the model with one. The model recognition accuracy remained above 98% and 95%, under different installation positions on the rail vehicle and the zero-drift phenomenon of the MEMS acceleration sensor, respectively. The presented method and system can also be extended to edge-aware applications of equipment such as automobiles and ships.

Code Generation Using Machine Learning: A Systematic Review

Recently, machine learning (ML) methods have been used to create powerful language models for a broad range of natural language processing tasks. An important subset of this field is that of generating code of programming languages for automatic software development. This review provides a broad and detailed overview of studies for code generation using ML. We selected 37 publications indexed in arXiv and IEEE Xplore databases that train ML models on programming language data to generate code. The three paradigms of code generation we identified in these studies are description-to-code, code-to-description, and code-to-code. The most popular applications that work in these paradigms were found to be code generation from natural language descriptions, documentation generation, and automatic program repair, respectively. The most frequently used ML models in these studies include recurrent neural networks, transformers, and convolutional neural networks. Other neural network architectures, as well as non-neural techniques, were also observed. In this review, we have summarized the applications, models, datasets, results, limitations, and future work of 37 publications. Additionally, we include discussions on topics general to the literature reviewed. This includes comparing different model types, comparing tokenizers, the volume and quality of data used, and methods for evaluating synthesized code. Furthermore, we provide three suggestions for future work for code generation using ML.

Combining Citation Network Information and Text Similarity for Research Article Recommender Systems

Researchers often need to gather a comprehensive set of papers relevant to a focused topic, but this is often difficult and time-consuming using existing search methods. For example, keyword searching suffers from difficulties with synonyms and multiple meanings. While some automated research-paper recommender systems exist, these typically depend on either a researcher’s entire library or just a single paper, resulting in either a quite broad or a quite narrow search. With these issues in mind, we built a new research-paper recommender system that utilizes both citation information and textual similarity of abstracts to provide a highly focused set of relevant results. The input to this system is a set of one or more related papers, and our system searches for papers that are closely related to the entire set. This framework helps researchers gather a set of papers that are closely related to a particular topic of interest, and allows control over which cross-section of the literature is located. We show the effectiveness of this recommender system by using it to recreate the references of review papers. We also show its utility as a general similarity metric between scientific articles by performing unsupervised clustering on sets of scientific articles. We release an implementation, ExCiteSearch (bitbucket.org/mmmontemore/excitesearch), to allow researchers to apply this framework to locate relevant scientific articles.

Novel Multi Center and Threshold Ternary Pattern Based Method for Disease Detection Method Using Voice

Smart health is one of the most popular and important components of smart cities. It is a relatively new context-aware healthcare paradigm influenced by several fields of expertise, such as medical informatics, communications and electronics, bioengineering, ethics, to name a few. Smart health is used to improve healthcare by providing many services such as patient monitoring, early diagnosis of disease and so on. The artificial neural network (ANN), support vector machine (SVM) and deep learning models, especially the convolutional neural network (CNN), are the most commonly used machine learning approaches where they proved to be performance in most cases. Voice disorders are rapidly spreading especially with the development of medical diagnostic systems, although they are often underestimated. Smart health systems can be an easy and fast support to voice pathology detection. The identification of an algorithm that discriminates between pathological and healthy voices with more accuracy is needed to obtain a smart and precise mobile health system. The main contribution of this paper consists of proposing a multiclass-pathologic voice classification using a novel multileveled textural feature extraction with iterative feature selector. Our approach is a simple and efficient voice-based algorithm in which a multi-center and multi threshold based ternary pattern is used (MCMTTP). A more compact multileveled features are then obtained by sample-based discretization techniques and Neighborhood Component Analysis (NCA) is applied to select features iteratively. These features are finally integrated with MCMTTP to achieve an accurate voice-based features detection. Experimental results of six classifiers with three diagnostic diseases (frontal resection, cordectomy and spastic dysphonia) show that the fused features are more suitable for describing voice-based disease detection.

*Published in the IEEE Electronics Packaging Society Section within IEEE Access .

Machine Learning Empowered Spectrum Sharing in Intelligent Unmanned Swarm Communication Systems: Challenges, Requirements and Solutions

The unmanned swarm system (USS) has been seen as a promising technology, and will play an extremely important role in both the military and civilian fields such as military strikes, disaster relief and transportation business. As the “nerve center” of USS, the unmanned swarm communication system (USCS) provides the necessary information transmission medium so as to ensure the system stability and mission implementation. However, challenges caused by multiple tasks, distributed collaboration, high dynamics, ultra-dense and jamming threat make it hard for USCS to manage limited spectrum resources. To tackle with such problems, the machine learning (ML) empowered intelligent spectrum management technique is introduced in this paper. First, based on the challenges of the spectrum resource management in USCS, the requirement of spectrum sharing is analyzed from the perspective of spectrum collaboration and spectrum confrontation. We found that suitable multi-agent collaborative decision making is promising to realize effective spectrum sharing in both two perspectives. Therefore, a multi-agent learning framework is proposed which contains mobile-computing-assisted and distributed structures. Based on the framework, we provide case studies. Finally, future research directions are discussed.

Harnessing Artificial Intelligence Capabilities to Improve Cybersecurity

Cybersecurity is a fast-evolving discipline that is always in the news over the last decade, as the number of threats rises and cybercriminals constantly endeavor to stay a step ahead of law enforcement. Over the years, although the original motives for carrying out cyberattacks largely remain unchanged, cybercriminals have become increasingly sophisticated with their techniques. Traditional cybersecurity solutions are becoming inadequate at detecting and mitigating emerging cyberattacks. Advances in cryptographic and Artificial Intelligence (AI) techniques (in particular, machine learning and deep learning) show promise in enabling cybersecurity experts to counter the ever-evolving threat posed by adversaries. Here, we explore AI’s potential in improving cybersecurity solutions, by identifying both its strengths and weaknesses. We also discuss future research opportunities associated with the development of AI techniques in the cybersecurity field across a range of application domains.

A Study on the Elimination of Thermal Reflections

Recently, thermal cameras have been used in various surveillance and monitoring systems. In particular, in camera-based surveillance systems, algorithms are being developed for detecting and recognizing objects from images acquired in dark environments. However, it is difficult to detect and recognize an object due to the thermal reflections generated in the image obtained from a thermal camera. For example, thermal reflection often occurs on a structure or the floor near an object, similar to shadows or mirror reflections. In this case, the object and the areas of thermal reflection overlap or are connected to each other and are difficult to separate. Thermal reflection also occurs on nearby walls, which can be detected as artifacts when an object is not associated with this phenomenon. In addition, the size and pixel value of the thermal reflection area vary greatly depending on the material of the area and the environmental temperature. In this case, the patterns and pixel values of the thermal reflection and the object are similar to each other and difficult to differentiate. These problems reduce the accuracy of object detection and recognition methods. In addition, no studies have been conducted on the elimination of thermal reflection of objects under different environmental conditions. Therefore, to address these challenges, we propose a method of detecting reflections in thermal images based on deep learning and their elimination via post-processing. Experiments using a self-collected database (Dongguk thermal image database (DTh-DB), Dongguk items and vehicles database (DI&V-DB)) and an open database showed that the performance of the proposed method is superior compared to that of other state-of-the-art approaches.

Machine Learning Designs, Implementations and Techniques

Submission Deadline: 15 February 2020

IEEE Access invites manuscript submissions in the area of Machine Learning Designs, Implementations and Techniques.

Most modern machine learning research is devoted to improving the accuracy of prediction. However, less attention is paid to deployment of machine and deep learning systems, supervised /unsupervised techniques for mining healthcare data, and time series similarity and irregular temporal data analysis. Most deployments are in the cloud, with abundant and scalable resources, and a free choice of computation platform. However, with the advent of intelligent physical devices—such as intelligent robots or self-driven cars—the resources are more limited, and the latency may be strictly bounded.

To address these questions, the focus of this Special Section in IEEE Access is on machine and deep learning designs, implementations and techniques, including both system level topics and other research questions related to the general use and framework of machine learning algorithms.

The topics of interest include, but are not limited to:

- Real time implementation of machine and deep learning,

- System level implementation, considering full pipeline from raw data until the decision layer

- Novel and innovative applications with strong emphasis on design and implementation

- Novel approaches for Temporal / Spatial/Spatio-Temporal Association analysis

- Pattern discovery from Time stamped Temporal and Interval databases

- High performance data mining in cloud

- Novel approaches for handling Uncertain and Imbalanced data

- Supervised/Unsupervised techniques for mining healthcare data

- Deep learning for translational bio-informatics

- Periodic/Sequential pattern mining

- Evolutionary algorithms

- Privacy-Preserving Data mining

- Time series similarity and Irregular temporal data analysis

- Mining Text Web and Social network data

- Imputation techniques for Temporal data

- Causality and Event Processing

- Applications of Data Mining in Anomaly and Intrusion detection

- Applications to medical informatics

We also highly recommend the submission of multimedia with each article as it significantly increases the visibility, downloads, and citations of articles.

Associate Editor: Shadi A. Aljawarneh, Jordan University of Science and Technology, Jordan

Guest Editors:

- Oguz Bayat, Altinbas University, Turkey

- Juan A. Lara, Madrid Open University, Udima, Spain

- Robert P. Schumaker, University of Texas at Tyler, USA

Relevant IEEE Access Special Sections:

- Visual Analysis for CPS Data

- Emerging Approaches to Cyber Security

- Data-Enabled Intelligence for Digital Health

IEEE Access Editor-in-Chief: Prof. Derek Abbott, University of Adelaide

Article submission: Contact Associate Editor and submit manuscript to: http://ieee.atyponrex.com/journal/ieee-access

For inquiries regarding this Special Section, please contact: [email protected] , [email protected] .

At a Glance

- Journal: IEEE Access

- Format: Open Access

- Frequency: Continuous

- Submission to Publication: 4-6 weeks (typical)

- Topics: All topics in IEEE

- Average Acceptance Rate: 27%

- Impact Factor: 3.4

- Model: Binary Peer Review

- Article Processing Charge: US $1,995

Featured Articles

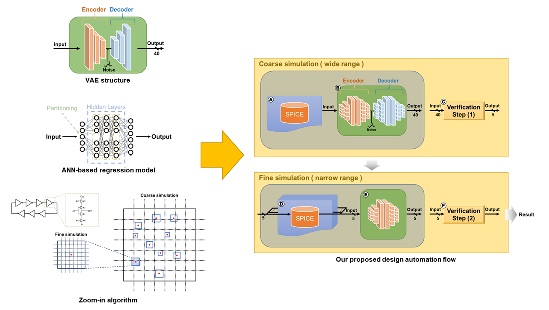

AMS Circuit Design Optimization Technique Based on ANN Regression Model With VAE Structure

View in IEEE Xplore

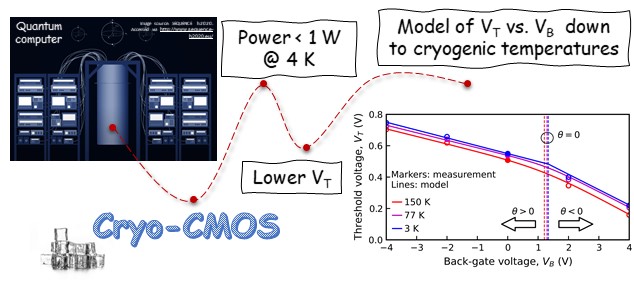

Novel Approach to FDSOI Threshold Voltage Model Validated at Cryogenic Temperatures

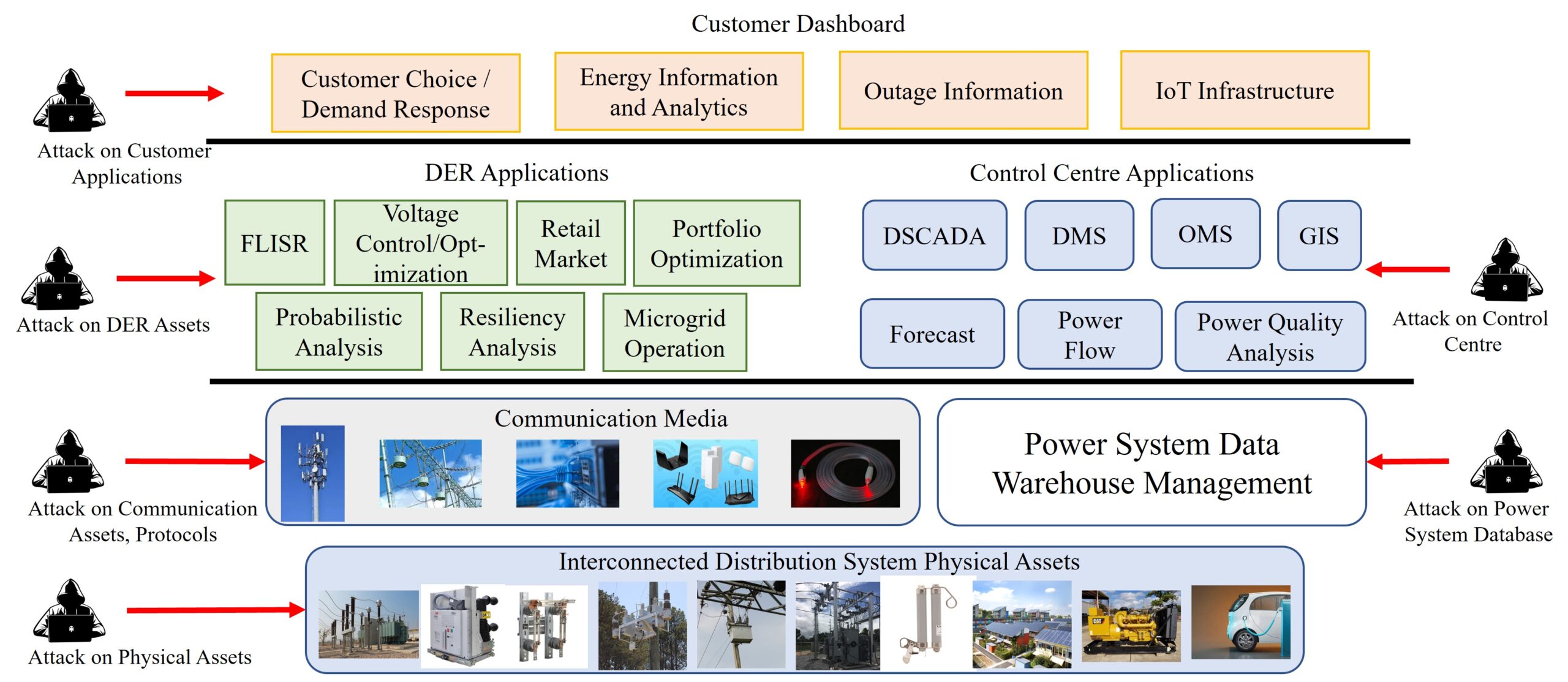

On the Cyber-Physical Needs of DER-Based Voltage Control/Optimization Algorithms in Active Distribution Network

Submission guidelines.

© 2024 IEEE - All rights reserved. Use of this website signifies your agreement to the IEEE TERMS AND CONDITIONS.

A not-for-profit organization, IEEE is the world’s largest technical professional organization dedicated to advancing technology for the benefit of humanity.

AWARD RULES:

NO PURCHASE NECESSARY TO ENTER OR WIN. A PURCHASE WILL NOT INCREASE YOUR CHANCES OF WINNING.

These rules apply to the “2024 IEEE Access Best Video Award Part 1″ (the “Award”).

- Sponsor: The Sponsor of the Award is The Institute of Electrical and Electronics Engineers, Incorporated (“IEEE”) on behalf of IEEE Access , 445 Hoes Lane, Piscataway, NJ 08854-4141 USA (“Sponsor”).

- Eligibility: Award is open to residents of the United States of America and other countries, where permitted by local law, who are the age of eighteen (18) and older. Employees of Sponsor, its agents, affiliates and their immediate families are not eligible to enter Award. The Award is subject to all applicable state, local, federal and national laws and regulations. Entrants may be subject to rules imposed by their institution or employer relative to their participation in Awards and should check with their institution or employer for any relevant policies. Void in locations and countries where prohibited by law.

- Agreement to Official Rules : By participating in this Award, entrants agree to abide by the terms and conditions thereof as established by Sponsor. Sponsor reserves the right to alter any of these Official Rules at any time and for any reason. All decisions made by Sponsor concerning the Award including, but not limited to the cancellation of the Award, shall be final and at its sole discretion.

- How to Enter: This Award opens on January 1, 2024 at 12:00 AM ET and all entries must be received by 11:59 PM ET on June 30, 2024 (“Promotional Period”).

Entrant must submit a video with an article submission to IEEE Access . The video submission must clearly be relevant to the submitted manuscript. Only videos that accompany an article that is accepted for publication in IEEE Access will qualify. The video may be simulations, demonstrations, or interviews with other experts, for example. Your video file should not exceed 100 MB.

Entrants can enter the Award during Promotional Period through the following method:

- The IEEE Author Portal : Entrants can upload their video entries while submitting their article through the IEEE Author Portal submission site .

- Review and Complete the Terms and Conditions: After submitting your manuscript and video through the IEEE Author Portal, entrants should then review and sign the Terms and Conditions .

Entrants who have already submitted a manuscript to IEEE Access without a video can still submit a video for inclusion in this Award so long as the video is submitted within 7 days of the article submission date. The video can be submitted via email to the article administrator. All videos must undergo peer review and be accepted along with the article submission. Videos may not be submitted after an article has already been accepted for publication.

The criteria for an article to be accepted for publication in IEEE Access are:

- The article must be original writing that enhances the existing body of knowledge in the given subject area. Original review articles and surveys are acceptable even if new data/concepts are not presented.

- Results reported must not have been submitted or published elsewhere (although expanded versions of conference publications are eligible for submission).

- Experiments, statistics, and other analyses must be performed to a high technical standard and are described in sufficient detail.

- Conclusions must be presented in an appropriate fashion and are supported by the data.

- The article must be written in standard English with correct grammar.

- Appropriate references to related prior published works must be included.

- The article must fall within the scope of IEEE Access

- Must be in compliance with the IEEE PSPB Operations Manual.

- Completion of the required IEEE intellectual property documents for publication.

- At the discretion of the IEEE Access Editor-in-Chief.

- Disqualification: The following items will disqualify a video from being considered a valid submission:

- The video is not original work.

- A video that is not accompanied with an article submission.

- The article and/or video is rejected during the peer review process.

- The article and/or video topic does not fit into the scope of IEEE Access .

- The article and/or do not follow the criteria for publication in IEEE Access .

- Videos posted in a comment on IEEE Xplore .

- Content is off-topic, offensive, obscene, indecent, abusive or threatening to others.

- Infringes the copyright, trademark or other right of any third party.

- Uploads viruses or other contaminating or destructive features.

- Is in violation of any applicable laws or regulations.

- Is not in English.

- Is not provided within the designated submission time.

- Entrant does not agree and sign the Terms and Conditions document.

Entries must be original. Entries that copy other entries, or the intellectual property of anyone other than the Entrant, may be removed by Sponsor and the Entrant may be disqualified. Sponsor reserves the right to remove any entry and disqualify any Entrant if the entry is deemed, in Sponsor’s sole discretion, to be inappropriate.

- Entrant’s Warranty and Authorization to Sponsor: By entering the Award, entrants warrant and represent that the Award Entry has been created and submitted by the Entrant. Entrant certifies that they have the ability to use any image, text, video, or other intellectual property they may upload and that Entrant has obtained all necessary permissions. IEEE shall not indemnify Entrant for any infringement, violation of publicity rights, or other civil or criminal violations. Entrant agrees to hold IEEE harmless for all actions related to the submission of an Entry. Entrants further represent and warrant, if they reside outside of the United States of America, that their participation in this Award and acceptance of a prize will not violate their local laws.

- Intellectual Property Rights: Entrant grants Sponsor an irrevocable, worldwide, royalty free license to use, reproduce, distribute, and display the Entry for any lawful purpose in all media whether now known or hereinafter created. This may include, but is not limited to, the IEEE A ccess website, the IEEE Access YouTube channel, the IEEE Access IEEE TV channel, IEEE Access social media sites (LinkedIn, Facebook, Twitter, IEEE Access Collabratec Community), and the IEEE Access Xplore page. Facebook/Twitter/Microsite usernames will not be used in any promotional and advertising materials without the Entrants’ expressed approval.

- Number of Prizes Available, Prizes, Approximate Retail Value and Odds of winning Prizes: Two (2) promotional prizes of $350 USD Amazon gift cards. One (1) grand prize of a $500 USD Amazon gift card. Prizes will be distributed to the winners after the selection of winners is announced. Odds of winning a prize depend on the number of eligible entries received during the Promotional Period. Only the corresponding author of the submitted manuscript will receive the prize.

The grand prize winner may, at Sponsor’ discretion, have his/her article and video highlighted in media such as the IEEE Access Xplore page and the IEEE Access social media sites.

The prize(s) for the Award are being sponsored by IEEE. No cash in lieu of prize or substitution of prize permitted, except that Sponsor reserves the right to substitute a prize or prize component of equal or greater value in its sole discretion for any reason at time of award. Sponsor shall not be responsible for service obligations or warranty (if any) in relation to the prize(s). Prize may not be transferred prior to award. All other expenses associated with use of the prize, including, but not limited to local, state, or federal taxes on the Prize, are the sole responsibility of the winner. Winner(s) understand that delivery of a prize may be void where prohibited by law and agrees that Sponsor shall have no obligation to substitute an alternate prize when so prohibited. Amazon is not a sponsor or affiliated with this Award.

- Selection of Winners: Promotional prize winners will be selected based on entries received during the Promotional Period. The sponsor will utilize an Editorial Panel to vote on the best video submissions. Editorial Panel members are not eligible to participate in the Award. Entries will be ranked based on three (3) criteria:

- Presentation of Technical Content

- Quality of Video

Upon selecting a winner, the Sponsor will notify the winner via email. All potential winners will be notified via their email provided to the sponsor. Potential winners will have five (5) business days to respond after receiving initial prize notification or the prize may be forfeited and awarded to an alternate winner. Potential winners may be required to sign an affidavit of eligibility, a liability release, and a publicity release. If requested, these documents must be completed, signed, and returned within ten (10) business days from the date of issuance or the prize will be forfeited and may be awarded to an alternate winner. If prize or prize notification is returned as undeliverable or in the event of noncompliance with these Official Rules, prize will be forfeited and may be awarded to an alternate winner.

- General Prize Restrictions: No prize substitutions or transfer of prize permitted, except by the Sponsor. Import/Export taxes, VAT and country taxes on prizes are the sole responsibility of winners. Acceptance of a prize constitutes permission for the Sponsor and its designees to use winner’s name and likeness for advertising, promotional and other purposes in any and all media now and hereafter known without additional compensation unless prohibited by law. Winner acknowledges that neither Sponsor, Award Entities nor their directors, employees, or agents, have made nor are in any manner responsible or liable for any warranty, representation, or guarantee, express or implied, in fact or in law, relative to any prize, including but not limited to its quality, mechanical condition or fitness for a particular purpose. Any and all warranties and/or guarantees on a prize (if any) are subject to the respective manufacturers’ terms therefor, and winners agree to look solely to such manufacturers for any such warranty and/or guarantee.

11.Release, Publicity, and Privacy : By receipt of the Prize and/or, if requested, by signing an affidavit of eligibility and liability/publicity release, the Prize Winner consents to the use of his or her name, likeness, business name and address by Sponsor for advertising and promotional purposes, including but not limited to on Sponsor’s social media pages, without any additional compensation, except where prohibited. No entries will be returned. All entries become the property of Sponsor. The Prize Winner agrees to release and hold harmless Sponsor and its officers, directors, employees, affiliated companies, agents, successors and assigns from and against any claim or cause of action arising out of participation in the Award.

Sponsor assumes no responsibility for computer system, hardware, software or program malfunctions or other errors, failures, delayed computer transactions or network connections that are human or technical in nature, or for damaged, lost, late, illegible or misdirected entries; technical, hardware, software, electronic or telephone failures of any kind; lost or unavailable network connections; fraudulent, incomplete, garbled or delayed computer transmissions whether caused by Sponsor, the users, or by any of the equipment or programming associated with or utilized in this Award; or by any technical or human error that may occur in the processing of submissions or downloading, that may limit, delay or prevent an entrant’s ability to participate in the Award.

Sponsor reserves the right, in its sole discretion, to cancel or suspend this Award and award a prize from entries received up to the time of termination or suspension should virus, bugs or other causes beyond Sponsor’s control, unauthorized human intervention, malfunction, computer problems, phone line or network hardware or software malfunction, which, in the sole opinion of Sponsor, corrupt, compromise or materially affect the administration, fairness, security or proper play of the Award or proper submission of entries. Sponsor is not liable for any loss, injury or damage caused, whether directly or indirectly, in whole or in part, from downloading data or otherwise participating in this Award.

Representations and Warranties Regarding Entries: By submitting an Entry, you represent and warrant that your Entry does not and shall not comprise, contain, or describe, as determined in Sponsor’s sole discretion: (A) false statements or any misrepresentations of your affiliation with a person or entity; (B) personally identifying information about you or any other person; (C) statements or other content that is false, deceptive, misleading, scandalous, indecent, obscene, unlawful, defamatory, libelous, fraudulent, tortious, threatening, harassing, hateful, degrading, intimidating, or racially or ethnically offensive; (D) conduct that could be considered a criminal offense, could give rise to criminal or civil liability, or could violate any law; (E) any advertising, promotion or other solicitation, or any third party brand name or trademark; or (F) any virus, worm, Trojan horse, or other harmful code or component. By submitting an Entry, you represent and warrant that you own the full rights to the Entry and have obtained any and all necessary consents, permissions, approvals and licenses to submit the Entry and comply with all of these Official Rules, and that the submitted Entry is your sole original work, has not been previously published, released or distributed, and does not infringe any third-party rights or violate any laws or regulations.

12.Disputes: EACH ENTRANT AGREES THAT: (1) ANY AND ALL DISPUTES, CLAIMS, AND CAUSES OF ACTION ARISING OUT OF OR IN CONNECTION WITH THIS AWARD, OR ANY PRIZES AWARDED, SHALL BE RESOLVED INDIVIDUALLY, WITHOUT RESORTING TO ANY FORM OF CLASS ACTION, PURSUANT TO ARBITRATION CONDUCTED UNDER THE COMMERCIAL ARBITRATION RULES OF THE AMERICAN ARBITRATION ASSOCIATION THEN IN EFFECT, (2) ANY AND ALL CLAIMS, JUDGMENTS AND AWARDS SHALL BE LIMITED TO ACTUAL OUT-OF-POCKET COSTS INCURRED, INCLUDING COSTS ASSOCIATED WITH ENTERING THIS AWARD, BUT IN NO EVENT ATTORNEYS’ FEES; AND (3) UNDER NO CIRCUMSTANCES WILL ANY ENTRANT BE PERMITTED TO OBTAIN AWARDS FOR, AND ENTRANT HEREBY WAIVES ALL RIGHTS TO CLAIM, PUNITIVE, INCIDENTAL, AND CONSEQUENTIAL DAMAGES, AND ANY OTHER DAMAGES, OTHER THAN FOR ACTUAL OUT-OF-POCKET EXPENSES, AND ANY AND ALL RIGHTS TO HAVE DAMAGES MULTIPLIED OR OTHERWISE INCREASED. ALL ISSUES AND QUESTIONS CONCERNING THE CONSTRUCTION, VALIDITY, INTERPRETATION AND ENFORCEABILITY OF THESE OFFICIAL RULES, OR THE RIGHTS AND OBLIGATIONS OF ENTRANT AND SPONSOR IN CONNECTION WITH THE AWARD, SHALL BE GOVERNED BY, AND CONSTRUED IN ACCORDANCE WITH, THE LAWS OF THE STATE OF NEW JERSEY, WITHOUT GIVING EFFECT TO ANY CHOICE OF LAW OR CONFLICT OF LAW, RULES OR PROVISIONS (WHETHER OF THE STATE OF NEW JERSEY OR ANY OTHER JURISDICTION) THAT WOULD CAUSE THE APPLICATION OF THE LAWS OF ANY JURISDICTION OTHER THAN THE STATE OF NEW JERSEY. SPONSOR IS NOT RESPONSIBLE FOR ANY TYPOGRAPHICAL OR OTHER ERROR IN THE PRINTING OF THE OFFER OR ADMINISTRATION OF THE AWARD OR IN THE ANNOUNCEMENT OF THE PRIZES.

- Limitation of Liability: The Sponsor, Award Entities and their respective parents, affiliates, divisions, licensees, subsidiaries, and advertising and promotion agencies, and each of the foregoing entities’ respective employees, officers, directors, shareholders and agents (the “Released Parties”) are not responsible for incorrect or inaccurate transfer of entry information, human error, technical malfunction, lost/delayed data transmissions, omission, interruption, deletion, defect, line failures of any telephone network, computer equipment, software or any combination thereof, inability to access web sites, damage to a user’s computer system (hardware and/or software) due to participation in this Award or any other problem or error that may occur. By entering, participants agree to release and hold harmless the Released Parties from and against any and all claims, actions and/or liability for injuries, loss or damage of any kind arising from or in connection with participation in and/or liability for injuries, loss or damage of any kind, to person or property, arising from or in connection with participation in and/or entry into this Award, participation is any Award-related activity or use of any prize won. Entry materials that have been tampered with or altered are void. If for any reason this Award is not capable of running as planned, or if this Award or any website associated therewith (or any portion thereof) becomes corrupted or does not allow the proper playing of this Award and processing of entries per these rules, or if infection by computer virus, bugs, tampering, unauthorized intervention, affect the administration, security, fairness, integrity, or proper conduct of this Award, Sponsor reserves the right, at its sole discretion, to disqualify any individual implicated in such action, and/or to cancel, terminate, modify or suspend this Award or any portion thereof, or to amend these rules without notice. In the event of a dispute as to who submitted an online entry, the entry will be deemed submitted by the authorized account holder the email address submitted at the time of entry. “Authorized Account Holder” is defined as the person assigned to an email address by an Internet access provider, online service provider or other organization responsible for assigning email addresses for the domain associated with the email address in question. Any attempt by an entrant or any other individual to deliberately damage any web site or undermine the legitimate operation of the Award is a violation of criminal and civil laws and should such an attempt be made, the Sponsor reserves the right to seek damages and other remedies from any such person to the fullest extent permitted by law. This Award is governed by the laws of the State of New Jersey and all entrants hereby submit to the exclusive jurisdiction of federal or state courts located in the State of New Jersey for the resolution of all claims and disputes. Facebook, LinkedIn, Twitter, G+, YouTube, IEEE Xplore , and IEEE TV are not sponsors nor affiliated with this Award.

- Award Results and Official Rules: To obtain the identity of the prize winner and/or a copy of these Official Rules, send a self-addressed stamped envelope to Kimberly Rybczynski, IEEE, 445 Hoes Lane, Piscataway, NJ 08854-4141 USA.

- IEEE Xplore Digital Library

- IEEE Standards

- IEEE Spectrum

Publications

IEEE Talks Big Data - Check out our new Q&A article series with big Data experts!

Call for Papers - Check out the many opportunities to submit your own paper. This is a great way to get published, and to share your research in a leading IEEE magazine!

Publications - See the list of various IEEE publications related to big data and analytics here.

Call for Blog Writers!

IEEE Cloud Computing Community is a key platform for researchers, academicians and industry practitioners to share and exchange ideas regarding cloud computing technologies and services, as well as identify the emerging trends and research topics that are defining the future direction of cloud computing. Come be part of this revolution as we invite blog posts in this regard and not limited to the list provided below:

- Cloud Deployment Frameworks

- Cloud Architecture

- Cloud Native Design Patterns

- Testing Services and Frameworks

- Storage Architectures

- Big Data and Analytics

- Internet of Things

- Virtualization techniques

- Legacy Modernization

- Security and Compliance

- Pricing Methodologies

- Service Oriented Architecture

- Microservices

- Container Technology

- Cloud Computing Impact and Trends shaping today’s business

- High availability and reliability

Call for Papers

No call for papers at this time.

IEEE Publications on Big Data

Read more at IEEE Computer Society.

IEEE Computer Magazine Special Issue on Big Data Management

- Big Data: Promises and Problems

Connecting the Dots With Big Data

- Better Health Care Through Data

- The Future of Crime Prevention

- Census and Sensibility

- Landing a Job in Big Data

Read more at The Institute.

Download full issue. (PDF, 5 MB)

IEEE Internet Computing July/August 2014

Web-Scale Datacenters

This issue of Internet Computing surveys issues surrounding Web-scale datacenters, particularly in the areas of cloud provisioning as well as networking optimization and configuration. They include workload isolation, recovery from transient server availability, network configuration, virtual networking, and content distribution.

Read more at IEEE Computer Society .

Networking for Big Data

The most current information for communications professionals involved with the interconnection of computing systems, this bimonthly magazine covers all aspects of data and computer communications.

Read more at IEEE Communications Society .

Special Issue on Big Data

Big data is transforming our lives, but it is also placing an unprecedented burden on our compute infrastructure. As data expansion rates outpace Moore's law and supply voltage scaling grinds to a halt, the IT industry is being challenged in its ability to effectively store, process, and serve the growing volumes of data. Delivering on the premise of big data in the postDennard era calls for specialization and tight integration across the system stack, with the aim of maximizing energy efficiency, performance scalability, resilience, and security.

data science Recently Published Documents

Total documents.

- Latest Documents

- Most Cited Documents

- Contributed Authors

- Related Sources

- Related Keywords

Assessing the effects of fuel energy consumption, foreign direct investment and GDP on CO2 emission: New data science evidence from Europe & Central Asia

Documentation matters: human-centered ai system to assist data science code documentation in computational notebooks.

Computational notebooks allow data scientists to express their ideas through a combination of code and documentation. However, data scientists often pay attention only to the code, and neglect creating or updating their documentation during quick iterations. Inspired by human documentation practices learned from 80 highly-voted Kaggle notebooks, we design and implement Themisto, an automated documentation generation system to explore how human-centered AI systems can support human data scientists in the machine learning code documentation scenario. Themisto facilitates the creation of documentation via three approaches: a deep-learning-based approach to generate documentation for source code, a query-based approach to retrieve online API documentation for source code, and a user prompt approach to nudge users to write documentation. We evaluated Themisto in a within-subjects experiment with 24 data science practitioners, and found that automated documentation generation techniques reduced the time for writing documentation, reminded participants to document code they would have ignored, and improved participants’ satisfaction with their computational notebook.

Data science in the business environment: Insight management for an Executive MBA

Adventures in financial data science, gecoagent: a conversational agent for empowering genomic data extraction and analysis.

With the availability of reliable and low-cost DNA sequencing, human genomics is relevant to a growing number of end-users, including biologists and clinicians. Typical interactions require applying comparative data analysis to huge repositories of genomic information for building new knowledge, taking advantage of the latest findings in applied genomics for healthcare. Powerful technology for data extraction and analysis is available, but broad use of the technology is hampered by the complexity of accessing such methods and tools. This work presents GeCoAgent, a big-data service for clinicians and biologists. GeCoAgent uses a dialogic interface, animated by a chatbot, for supporting the end-users’ interaction with computational tools accompanied by multi-modal support. While the dialogue progresses, the user is accompanied in extracting the relevant data from repositories and then performing data analysis, which often requires the use of statistical methods or machine learning. Results are returned using simple representations (spreadsheets and graphics), while at the end of a session the dialogue is summarized in textual format. The innovation presented in this article is concerned with not only the delivery of a new tool but also our novel approach to conversational technologies, potentially extensible to other healthcare domains or to general data science.

Differentially Private Medical Texts Generation Using Generative Neural Networks

Technological advancements in data science have offered us affordable storage and efficient algorithms to query a large volume of data. Our health records are a significant part of this data, which is pivotal for healthcare providers and can be utilized in our well-being. The clinical note in electronic health records is one such category that collects a patient’s complete medical information during different timesteps of patient care available in the form of free-texts. Thus, these unstructured textual notes contain events from a patient’s admission to discharge, which can prove to be significant for future medical decisions. However, since these texts also contain sensitive information about the patient and the attending medical professionals, such notes cannot be shared publicly. This privacy issue has thwarted timely discoveries on this plethora of untapped information. Therefore, in this work, we intend to generate synthetic medical texts from a private or sanitized (de-identified) clinical text corpus and analyze their utility rigorously in different metrics and levels. Experimental results promote the applicability of our generated data as it achieves more than 80\% accuracy in different pragmatic classification problems and matches (or outperforms) the original text data.

Impact on Stock Market across Covid-19 Outbreak

Abstract: This paper analysis the impact of pandemic over the global stock exchange. The stock listing values are determined by variety of factors including the seasonal changes, catastrophic calamities, pandemic, fiscal year change and many more. This paper significantly provides analysis on the variation of listing price over the world-wide outbreak of novel corona virus. The key reason to imply upon this outbreak was to provide notion on underlying regulation of stock exchanges. Daily closing prices of the stock indices from January 2017 to January 2022 has been utilized for the analysis. The predominant feature of the research is to analyse the fact that does global economy downfall impacts the financial stock exchange. Keywords: Stock Exchange, Matplotlib, Streamlit, Data Science, Web scrapping.

Information Resilience: the nexus of responsible and agile approaches to information use

AbstractThe appetite for effective use of information assets has been steadily rising in both public and private sector organisations. However, whether the information is used for social good or commercial gain, there is a growing recognition of the complex socio-technical challenges associated with balancing the diverse demands of regulatory compliance and data privacy, social expectations and ethical use, business process agility and value creation, and scarcity of data science talent. In this vision paper, we present a series of case studies that highlight these interconnected challenges, across a range of application areas. We use the insights from the case studies to introduce Information Resilience, as a scaffold within which the competing requirements of responsible and agile approaches to information use can be positioned. The aim of this paper is to develop and present a manifesto for Information Resilience that can serve as a reference for future research and development in relevant areas of responsible data management.

qEEG Analysis in the Diagnosis of Alzheimers Disease; a Comparison of Functional Connectivity and Spectral Analysis

Alzheimers disease (AD) is a brain disorder that is mainly characterized by a progressive degeneration of neurons in the brain, causing a decline in cognitive abilities and difficulties in engaging in day-to-day activities. This study compares an FFT-based spectral analysis against a functional connectivity analysis based on phase synchronization, for finding known differences between AD patients and Healthy Control (HC) subjects. Both of these quantitative analysis methods were applied on a dataset comprising bipolar EEG montages values from 20 diagnosed AD patients and 20 age-matched HC subjects. Additionally, an attempt was made to localize the identified AD-induced brain activity effects in AD patients. The obtained results showed the advantage of the functional connectivity analysis method compared to a simple spectral analysis. Specifically, while spectral analysis could not find any significant differences between the AD and HC groups, the functional connectivity analysis showed statistically higher synchronization levels in the AD group in the lower frequency bands (delta and theta), suggesting that the AD patients brains are in a phase-locked state. Further comparison of functional connectivity between the homotopic regions confirmed that the traits of AD were localized in the centro-parietal and centro-temporal areas in the theta frequency band (4-8 Hz). The contribution of this study is that it applies a neural metric for Alzheimers detection from a data science perspective rather than from a neuroscience one. The study shows that the combination of bipolar derivations with phase synchronization yields similar results to comparable studies employing alternative analysis methods.

Big Data Analytics for Long-Term Meteorological Observations at Hanford Site

A growing number of physical objects with embedded sensors with typically high volume and frequently updated data sets has accentuated the need to develop methodologies to extract useful information from big data for supporting decision making. This study applies a suite of data analytics and core principles of data science to characterize near real-time meteorological data with a focus on extreme weather events. To highlight the applicability of this work and make it more accessible from a risk management perspective, a foundation for a software platform with an intuitive Graphical User Interface (GUI) was developed to access and analyze data from a decommissioned nuclear production complex operated by the U.S. Department of Energy (DOE, Richland, USA). Exploratory data analysis (EDA), involving classical non-parametric statistics, and machine learning (ML) techniques, were used to develop statistical summaries and learn characteristic features of key weather patterns and signatures. The new approach and GUI provide key insights into using big data and ML to assist site operation related to safety management strategies for extreme weather events. Specifically, this work offers a practical guide to analyzing long-term meteorological data and highlights the integration of ML and classical statistics to applied risk and decision science.

Export Citation Format

Share document.

For IEEE Members

Ieee spectrum, follow ieee spectrum, support ieee spectrum, enjoy more free content and benefits by creating an account, saving articles to read later requires an ieee spectrum account, the institute content is only available for members, downloading full pdf issues is exclusive for ieee members, downloading this e-book is exclusive for ieee members, access to spectrum 's digital edition is exclusive for ieee members, following topics is a feature exclusive for ieee members, adding your response to an article requires an ieee spectrum account, create an account to access more content and features on ieee spectrum , including the ability to save articles to read later, download spectrum collections, and participate in conversations with readers and editors. for more exclusive content and features, consider joining ieee ., join the world’s largest professional organization devoted to engineering and applied sciences and get access to all of spectrum’s articles, archives, pdf downloads, and other benefits. learn more about ieee →, join the world’s largest professional organization devoted to engineering and applied sciences and get access to this e-book plus all of ieee spectrum’s articles, archives, pdf downloads, and other benefits. learn more about ieee →, access thousands of articles — completely free, create an account and get exclusive content and features: save articles, download collections, and talk to tech insiders — all free for full access and benefits, join ieee as a paying member., data science news & articles.

Showing 14 posts that have the tag “data-science”

This Startup Is Building the Internet of Underwater Things

Wsense’s innovative networking systems are transforming how we explore ocean environments, deploying data science and ai to fight wildlife trafficking, nyu tandon’s juliana freire is leading a team aimed at using data science to bring down criminals trafficking humans and exotic animals, pfizer’s edge in the covid-19 vaccine race: data science, a year in the life of the data scientists who helped bring pfizer's covid-19 vaccine to the public in record time, engineering bias out of ai, machines that learn the worst human impulses can still relearn, robotics news in your inbox, weekly.

Fully Open Access Topical Journals

A Growing Collection of Gold Fully Open Access (OA) Options

IEEE offers more options than ever to authors with the launch of new gold fully open access journals spanning a wide range of technologies. These journals are significant additions to IEEE’s well-known and respected portfolio of fully open access journals. In addition, many of the journals featured here target an accelerated publication time frame of 10 weeks for most accepted papers to help get your research exposed faster. Visit the publication home page of each title for details.

The fully open access journals are accepting submissions. Please see each journal’s description below for more details. All of the titles are fully compliant with funder mandates including Plan S. All IEEE Open Access titles, current and new, will be hosted on the IEEE Xplore ® platform.

Call for Papers

Submit a paper to an ieee fully open access journal.

IEEE Open Journal of Antennas and Propagation

High-quality, peer reviewed research covering antennas, including analysis, design, development, measurement, standards, and testing; radiation, propagation, and the interaction of electromagnetic waves with discrete and continuous media.

This fully open access journal publishes high-quality, peer reviewed papers covering antennas, including analysis, design, development, measurement, standards, and testing; radiation, propagation, and the interaction of electromagnetic waves with discrete and continuous media; and applications and systems pertinent to antennas, propagation, and sensing, such as applied optics, millimeter-and sub-millimeter-wave techniques, antenna signal processing and control, radio astronomy, and propagation and radiation aspects of terrestrial and space-based communication, including wireless, mobile, satellite, and telecommunications at all frequencies. The journal peer-review process targets a publication period of 10 weeks from submission to online publication.

Editor-in-Chief: Konstantina (Nantia) Nikita Professor National Technical University of Athens, Greece

Learn More and Submit a Paper

IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing

Addresses the growing field of applications in Earth observations and remote sensing and provides a venue for the rapidly expanding special issues that are being sponsored by the IEEE Geosciences and Remote Sensing Society.

The IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing addresses the growing field of applications in Earth observations and remote sensing, and also provides a venue for the rapidly expanding special issues that are being sponsored by the IEEE Geosciences and Remote Sensing Society. The journal draws upon the experience of the highly successful “IEEE Transactions on Geoscience and Remote Sensing” and provide a complementary medium for the wide range of topics in applied earth observations. Papers should address current issues and techniques in applied remote and in situ sensing, their integration, and applied modeling and information creation for understanding the Earth. Applications are for the Earth, oceans and atmosphere. Topics can include observations, derived information such as forecast data, simulated information, data assimilation and Earth information techniques to address science and engineering issues of the Earth system. The technical content of papers must be both new and significant.

IEEE Open Journal of Circuits and Systems

Featuring high-quality peer reviewed research covering the theory, analysis, design, tools, and implementation of circuits and systems.

This fully open access journal publishes high-quality, peer-reviewed papers covering the theory, analysis, design, tools, and implementation of circuits and systems. This includes their theoretical foundations, applications, and architectures, as well as circuits and systems implementation of algorithms for signal and information processing. The journal peer-review process targets a publication period of 10 weeks from submission to online publication.

Editor-in-Chief: Gabriele Manganaro, Ph.D., FIEEE Technology Director Analog Devices, Inc., USA

IEEE Open Journal of the Communications Society

Featuring high-quality peer reviewed research covering science, technology, applications and standards for information organization, collection and transfer using electronic, optical and wireless channels and networks.

As a fully open access journal publishing high-quality peer reviewed papers, IEEE Open Journal of the Communications Society covers science, technology, applications and standards for information organization, collection and transfer using electronic, optical and wireless channels and networks, including but not limited to: Systems and network architecture, control and management; Protocols, software and middleware; Quality of service, reliability and security; Modulation, detection, coding, and signaling; Switching and routing; Mobile and portable communications; Terminals and other end-user devices; Networks for content distribution and distributed computing; and Communications-based distributed resources control. The journal peer-review process targets a publication period of 10 weeks from submission to online publication.

Editor-in-Chief: Octavia A. Dobre, Dipl.-Ing., Ph.D. Professor and Research Chair Memorial University, Canada

IEEE Open Journal of the Computer Society

Forum for rapid publication of open access articles describing high-impact results in all aspects of theory, design, practice, and application relating to computer and information processing science and technology.

The IEEE Open Journal of the Computer Society (OJ-CS) is a rigorously peer-reviewed forum for rapid publication of open access articles describing high-impact results in all areas of interest to the IEEE Computer Society. This new fully open access journal complements existing IEEE Computer Society publications by providing a rapid review cycle and a thorough review of technical articles. It is dedicated to publishing articles on the latest emerging topics and trends in all aspects of computing with a scope that encompasses all aspects of theory, design, practice, and application relating to computer and information processing science and technology. The journal peer-review process targets a publication period of 10 weeks from submission to online publication.

Editor-in-Chief: Dr. Song Guo Department of Computing The Hong Kong Polytechnic University

IEEE Open Journal of Control Systems

Publication of the IEEE Control Systems Society, this journal aims to publish high-quality papers on the theory, design, optimization, and applications of dynamic systems and control.

The IEEE Open Journal of Control Systems covers the theory, design, optimization, and applications of dynamic systems and control. The field integrates elements of sensing, communication, decision and actuation components, as relevant for the analysis, design and operation of dynamic systems and control. The systems considered include: technological, physical, biological, economic, organizational and other entities, and combinations thereof. The journal peer-review process targets a publication period of 10 weeks from submission to online publication.

Editor-in-Chief: Sonia Martínez University of California, San Diego United States

IEEE Data Descriptions

Now Accepting Submissions! This new publication is a peer-reviewed journal that publishes short articles on all aspects of data: data descriptors, data collections, and metadata.

IEEE Data Descriptions is a peer-reviewed journal that publishes short articles on all aspects of data: data descriptors, data collections, and metadata. Its overarching purpose is to promote publicly available datasets (open access or subscription-based access) in support of reproducible science while at the same time bringing insights into the associated dataset, data collection methods, and data quality. The metadata collected provides enhanced dataset discoverability and creates a foundation for future data science tools such as auto-discovery and mashups.

Datasets described in IEEE Data Descriptions must be findable, accessible, interoperable, and reusable. The dataset needs to be of a quality high enough that other researchers can use it for their research experimentation and have some permanence. Articles describing datasets must be comprehensive and follow the outlined sections listed in Author Information. The preference is for data to be stored within IEEE DataPort, however, IEEE Data Descriptions accepts submissions where data is stored at other persistent/permanent locations.

Editor-in-Chief: Stephen Makonin Simon Fraser University Vancouver, Canada

IEEE Journal of the Electron Devices Society

Featuring high quality research in the field of electron and ion devices ranging from fundamentals to applied research.

Featuring high-quality research in the field of electron and ion devices ranging from fundamentals to applied research, this journal provides authors an affordable outlet for rapid publishing and universal access, coupled with superior technical quality.

IEEE Open Journal of Engineering in Medicine and Biology

High-quality research covering the development and application of engineering concepts and methods to biology, medicine and health sciences.

As a fully open access journal publishing high-quality peer reviewed papers, IEEE Open Journal of Engineering in Medicine and Biology covers the development and application of engineering concepts and methods to biology, medicine and health sciences to provide effective solutions to biological, medical and healthcare problems. It encompasses the development of mathematical theories, physical, biological and chemical principles, computational models and algorithms, devices and systems for clinical, industrial and educational applications. The journal peer-review process targets a publication period of 10 weeks from submission to online publication.

Editor-in-Chief: Paolo Bonato Associate Professor Harvard University, USA

IEEE Journal on Exploratory Solid-State Computational Devices and Circuits

Multi-disciplinary research in solid-state circuits using exploratory materials and devices for novel energy efficient computation beyond standard CMOS (Complementary Metal Oxide Semiconductor) technology.

Multi-disciplinary research in solid-state circuits using exploratory materials and devices for novel energy efficient computation beyond standard CMOS (Complementary Metal Oxide Semiconductor) technology. Focus is on the exploration of materials, devices and computation circuits to enable Moore’s Law to continue for computation beyond a 10 to 15 year horizon (beyond end of the roadmap for CMOS technologies) with the associated density scaling and improvement in energy efficiency.

IEEE Open Journal on Immersive Displays

Now Accepting Submissions! New publication will be home to publications in display science and applications.

The IEEE Open Journal on Immersive Displays (OJID) will be home to publications in display science and applications. The field of displays is diverse, ranging from the science and engineering of materials and devices to their application in high definition, form-factor-independent displays featuring interactivity, virtual and augmented reality, and 3D content. Submissions on advanced fabrication processing, thin film active and passive devices, and lifetime and reliability evaluation are welcome when display is the focus or where there is a direct relationship to the nature of the display system. Tutorial and review papers extending the frontiers of immersive display technologies and novel applications are also published.

Editor-in-Chief: Arokia Nathan University of Cambridge Hertfordshire, U.K.

IEEE Journal of Indoor and Seamless Positioning and Navigation

Publishes original research in the fields of localization and tracking of people, robots, and objects.

IEEE Journal of Indoor and Seamless Positioning and Navigation (J-ISPIN) publishes original research in the fields of localization and tracking of people, robots, and objects. It covers all aspects of localization systems, including sensing, communications, location-based services, mapping, protocols, human interfaces and standards. The scope includes methods and systems addressing indoor environments as well as those enabling seamless transition between heterogeneous indoor contexts or between indoor and outdoor environments, for example where Global Navigation Satellites Systems are underperforming or unavailable.

Editor-in-Chief: Valérie Renaudin Senior Researcher University Gustave Eiffel, France

IEEE Open Journal of the Industrial Electronics Society

Featuring high quality research covering the theory and applications of electronics, controls, communications, instrumentation and computational intelligence to industrial and manufacturing systems and processes.

This fully open access journal publishes high-quality, peer-reviewed papers covering the theory and applications of electronics, controls, communications, instrumentation and computational intelligence to industrial and manufacturing systems and processes. The journal peer-review process targets a publication period of 10 weeks from submission to online publication.

Editor-in-Chief: Dr. Leopoldo Garcia Franquelo Professor, Electronics Engineering Universidad de Sevilla, Spain

IEEE Open Journal of Industry Applications

Covering the advancement of the theory and practice of electrical and electronic engineering in the development, design, manufacture and application of electrical systems, apparatus, devices, and controls to the processes and equipment of industry and commerce.

As a fully open access journal publishing high-quality peer reviewed papers, IEEE Open Journal of Industry Applications covers the advancement of the theory and practice of electrical and electronic engineering in the development, design, manufacture and application of electrical systems, apparatus, devices, and controls to the processes and equipment of industry and commerce; the promotion of safe, reliable, and economic installations; industry leadership in energy conservation and environmental, health, and safety issues; the creation of voluntary engineering standards and recommended practices; and the professional development of its readers. The journal peer-review process targets a publication period of 10 weeks from submission to online publication.

Editor-in-Chief: Professor Pericle Zanchetta Fellow IEEE Faculty of Engineering University of Nottingham, UK

IEEE Open Journal of Instrumentation and Measurement

Publication of the Instrumentation and Measurement Society, this journal publishes papers on the science, technology, and application of instrumentation and measurement.

The IEEE Open Journal of Instrumentation and Measurement publishes papers on the science, technology, and application of instrumentation and measurement. Instrumentation and measurement, in the current context of the IEEE IMS community, consists of methods, instruments, systems, and applications for measurement, detection, tracking, monitoring, characterization, identification, sensing, estimation, recognition, or diagnosis of a physical phenomenon; or metrology and measurement theory including measurement uncertainty, instrument precision, calibration, etc.

Editor-in-Chief: Shervin Shirmohammadi University of Ottawa Canada

IEEE Open Journal of Intelligent Transportation Systems

Featuring high-quality research covering the theoretical, experimental and operational aspects of electrical and electronics engineering and information technologies as applied to Intelligent Transportation Systems (ITS).

As a fully open access journal publishing high-quality peer reviewed papers, IEEE Open Journal of Intelligent Transportation Systems covers theoretical, experimental and operational aspects of electrical and electronics engineering and information technologies as applied to Intelligent Transportation Systems (ITS), defined as those systems utilizing synergistic technologies and systems engineering concepts to develop and improve transportation systems of all kinds. The journal peer-review process targets a publication period of 10 weeks from submission to online publication.

Editor-in-Chief: Dr. Bart van Arem Full Professor of Transport Modelling Delft University of Technology, The Netherlands

IEEE Transactions on Machine Learning in Communications and Networking

Featuring high-quality manuscripts on advances in machine learning methods for and applications to communications and networking.

The IEEE Transactions on Machine Learning in Communications and Networking publishes high-quality manuscripts on advances in machine learning methods for and applications to communications and networking. Furthermore, articles developing novel communication and networking techniques for distributed machine learning algorithms are of interest. Both theoretical contributions (including new theories, techniques, concepts, algorithms, and analyses) and practical contributions (including system experiments, prototypes, and new applications) are encouraged.

Editor-in-Chief: Walid Saad Professor Virginia Tech Research Center – Arlington, USA

IEEE Journal of Microwaves

Covering articles on the theory, techniques and applications of guided wave and wireless technologies and spanning the electromagnetic spectrum from RF/microwave through millimeter-waves and terahertz.

The IEEE Journal of Microwaves is a fully open access publication covering the complete scope of the Microwave Theory and Techniques Society which includes articles on the theory, techniques and applications of guided wave and wireless technologies and spanning the electromagnetic spectrum from RF/microwave through millimeter-waves and terahertz, covering the aspects of materials, components, devices, circuits, modules, and systems which involve the generation, modulation, demodulation, control, transmission, sensing and effects of electromagnetic signals.

Editor-in-Chief: Peter H. Siege THz Global, NASA Jet Propulsion Laboratory, California Institute of Technology Pasadena, California

IEEE Open Journal of Nanotechnology

Featuring high-quality, peer reviewed research covering the theory, design, and development of nanotechnology and its scientific, engineering, and industrial applications.

As a fully open access journal publishing high-quality peer reviewed papers, IEEE Open Journal of Nanotechnology covers the theory, design, and development of nanotechnology and its scientific, engineering, and industrial applications. The journal peer-review process targets a publication period of 10 weeks from submission to online publication.

Co-Editors-in-Chief: Professor Wen J. Li Chair Professor of Biomedical Engineering Associate Provost City University of Hong Kong, Hong Kong

Professor Jin-Woo Kim Professor of Biological Engineering and Nanoscience & Engineering University of Arkansas, USA

Professor Seiji Samukawa Director of Innovative Energy Research Center, Institute of Fluid Science (IFS) Principal Investigator of Advance Institute for Materials Research (AIMR) Tohoku University, Japan

IEEE Transactions on Neural Systems and Rehabilitation Engineering

Covering the rehabilitative and neural aspects of biomedical engineering, including functional electrical stimulation, acoustic dynamics, human performance measurement, and more.

Rehabilitative and neural aspects of biomedical engineering, including functional electrical stimulation, acoustic dynamics, human performance measurement and analysis, nerve stimulation, electromyography, motor control and stimulation; and hardware and software applications for rehabilitation engineering and assistive devices.

IEEE Photonics Journal

Dedicated to the rapid disclosure of research at the forefront of all areas of photonics and addressing issues ranging from fundamental understanding to emerging technologies.

Breakthroughs in the generation of light and its control and utilization have given rise to the field of Photonics: a rapidly expanding area of science and technology with major technological and economic impact. IEEE Photonics Journal is an online-only journal dedicated to the rapid disclosure of top-quality peer-reviewed research at the forefront of all areas of photonics. Contributions addressing issues ranging from fundamental understanding to emerging technologies and applications are within the scope of the Journal.

IEEE Open Access Journal of Power and Energy

High-quality, peer reviewed research covering the development, planning, design, construction, maintenance, installation, and operation of equipment, structures, power systems and usage of electric energy, including its measurement and control.

As a fully open access journal publishing high-quality peer reviewed papers, the IEEE Open Access Journal of Power and Energy publishes articles focused on the development, planning, design, construction, maintenance, installation, and operation of equipment, structures, and power systems for the safe, sustainable, economic, and reliable conversion, generation, transmission, distribution, storage, and usage of electric energy, including its measurement and control. The journal peer-review process targets a publication period of 10 weeks from submission to online publication.

Editor-in-Chief: Fangxing “Fran” Li The University of Tennessee Knoxville, TN 37996 USA [email protected]

IEEE Open Journal of Power Electronics

Covering the development and application of power electronic systems and technologies, which encompass the effective use of electronic components, the application of circuit theory and design techniques and the development of analytical methods and tools.

The IEEE Open Journal of Power Electronics covers the development and application of power electronic systems and technologies, which encompass the effective use of electronic components, the application of circuit theory and design techniques and the development of analytical methods and tools toward efficient electronic conversion, control and conditioning of electric power to enable the sustainable use of energy. As a fully open access journal publishing high-quality peer reviewed papers, the Society’s aim is to publish novel developments as well as tutorial and survey articles including those of value to both the R&D and practicing professionals in the field. The journal peer-review process targets a publication period of 10 weeks from submission to online publication.

Editor-in-Chief: Alan Mantooth, Ph.D., P.E., FIEEE Distinguished Professor of Electrical Engineering University of Arkansas, USA

IEEE Transactions on Privacy

Now Accepting Submissions! New publication will provide a multidisciplinary forum for theoretical, methodological, engineering, and applications aspects of privacy and data protection, including specification, design, implementation, testing, and validation.

The IEEE Transactions on Privacy provides a multidisciplinary forum for theoretical, methodological, engineering, and applications aspects of privacy and data protection, including specification, design, implementation, testing, and validation. Privacy, in this context, is defined as the freedom from unauthorized intrusion in its broadest sense, arising from any activity in information collection, information processing, information dissemination or invasion. The transactions publishes articles reporting significant advances in theoretical models and formalization as well as engineering tools supporting the above activities, design frameworks and languages, architectures, infrastructures, model-based approaches, study cases, and standards.

IEEE Transactions on Quantum Engineering

Publishing regular, review, and tutorial articles based on the engineering applications of quantum phenomena, including quantum computation, information, communication, software, hardware, devices, and metrology.

Publishes regular, review, and tutorial articles based on the engineering applications of quantum phenomena, including quantum computation, information, communication, software, hardware, devices, and metrology. Articles also address quantum-engineering aspects of superconductivity, magnetics, microwave techniques, photonics, and signal processing.

IEEE Journal of Selected Areas in Sensors

Now Accepting Submissions! New publication of the IEEE Sensors Council, this journal publishes papers in all areas of the field of interest of the IEEE Sensors Council.